Here we go with a proper software test!

So far I’ve run a series of about 40 simulations for three different vehicle configurations. Some of the runs ended in a crash, obviously, but generally speaking the results are… well, (at least) very good.

I was a bit hesitant because of all these mesh problems, but ultimately I decided to start this topic and present the work I’ve done so far. The reason is that, despite the imperfect mesh, the outcomes have started to be repeatable. While playing with the first vehicle configuration and changing both mesh and simulation settings, I reached a state where the results matched the experimental data. And when I transferred the mesh and simulation settings to the two other configurations, the simulations delivered very good convergence right away. Below I’d like to present the outcomes together with the convergence rate and some short comments.

Also, given my experience with different types of simulations, I know that sometimes you are constrained by the geometry and/or the conditions and have no choice but to accept the situation. In this particular case I’m aware that some regions may be very problematic for the mesher and later for the solver, and that obtaining the desired mesh there is nearly impossible.

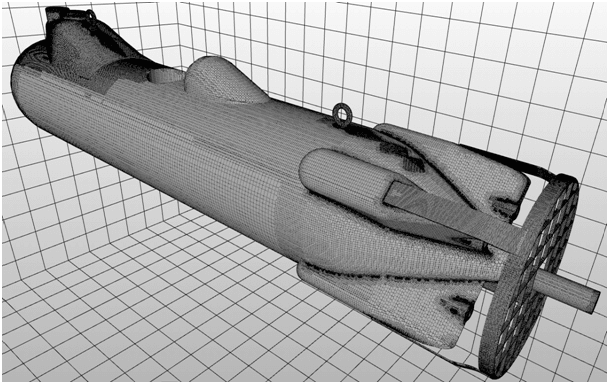

In short: what we have here is an unmanned mine-cleaning underwater vehicle. It is powered in two ways: by standard propellers and by waterjet drives. The standard propellers are additionally protected by a grill. All the simulations were run at the operational velocity.

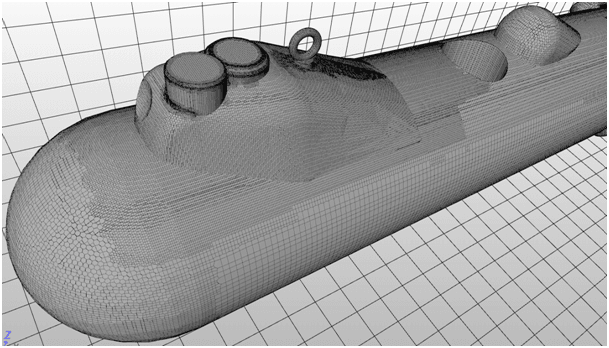

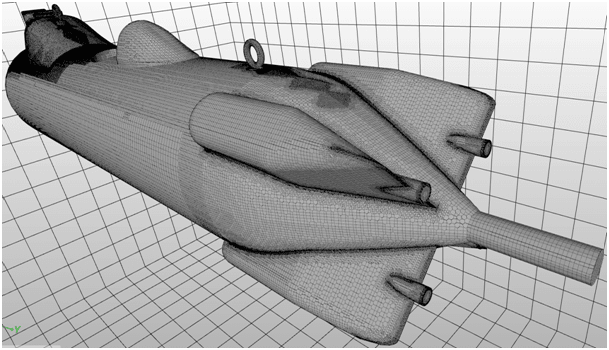

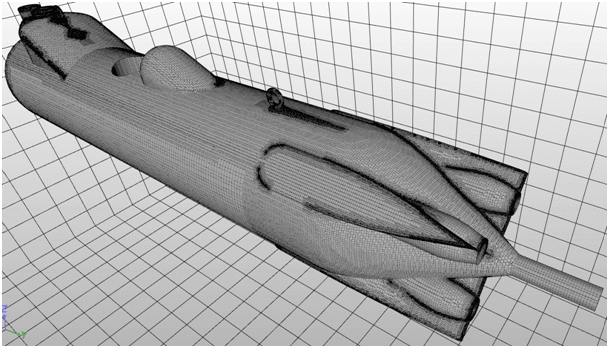

Surface meshes (I left the pictures in large format for a better view):

ROV Standard Propellers with Grill

aft section

bow section

ROV Standard Propellers

aft section

ROV WaterJet Drives 50

aft section

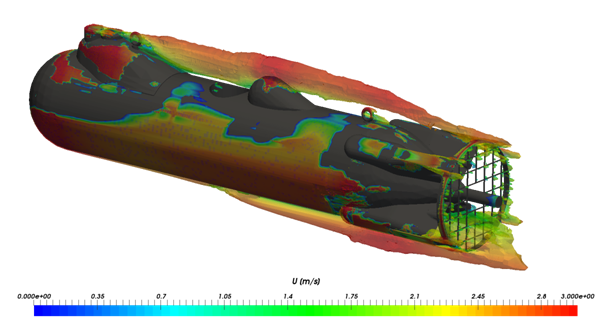

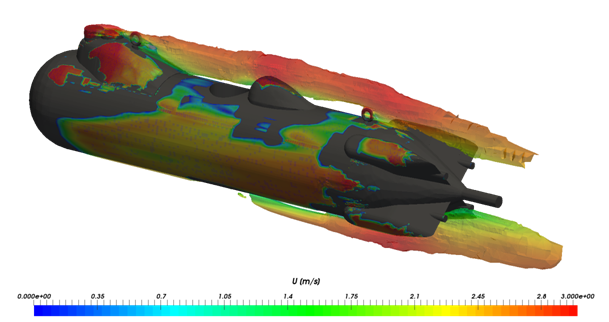

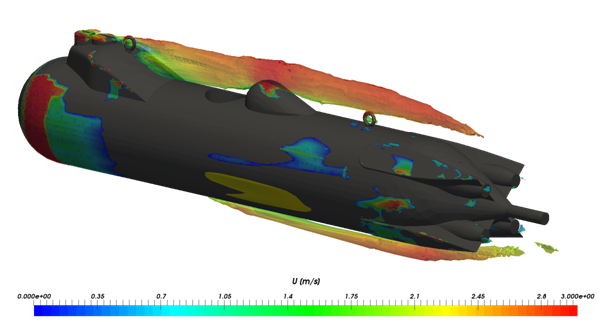

I wasn’t sure how to present the outcomes in an attractive way. In the end I chose a turbulence representation coloured by velocity. I hope that is fine with you.

ROV Standard Propellers with Grill

ROV Standard Propellers

ROV WaterJet Drives 50

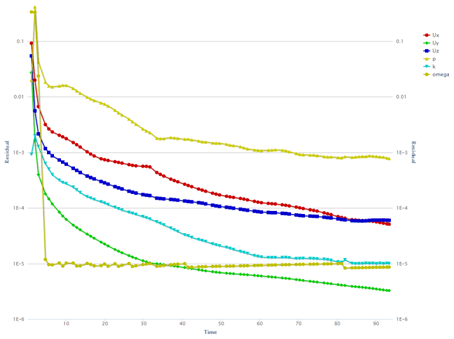

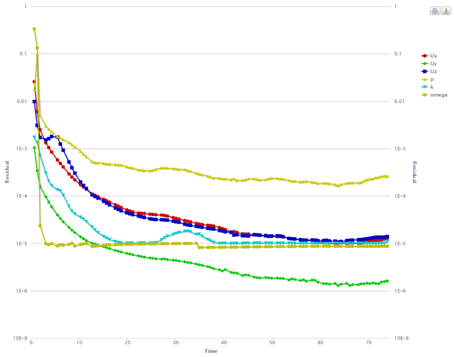

I’d like to add the plots too, as I have some comments on them:

ROV Standard Propellers with Grill

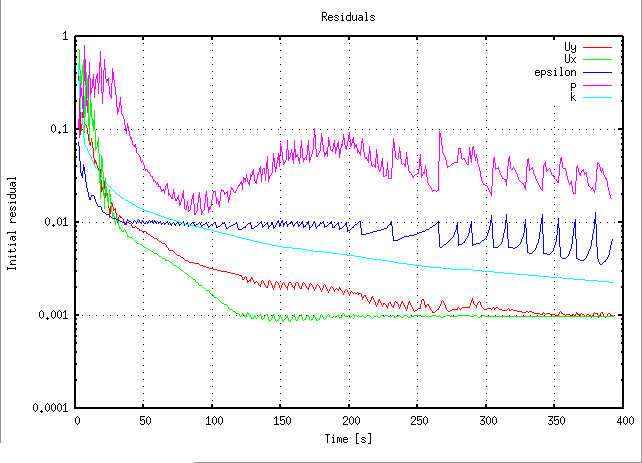

ROV Standard Propellers

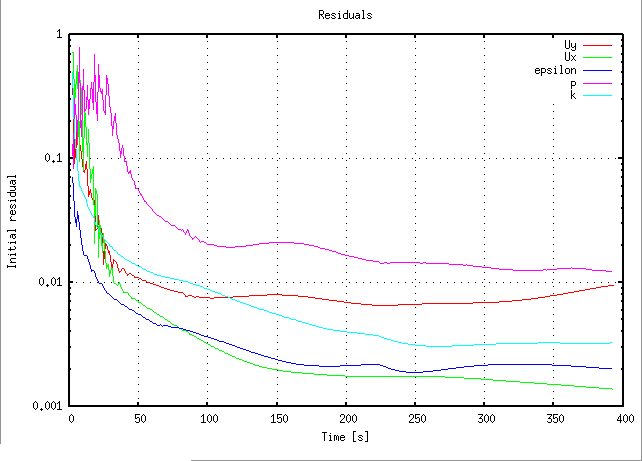

ROV WaterJet Drives 50

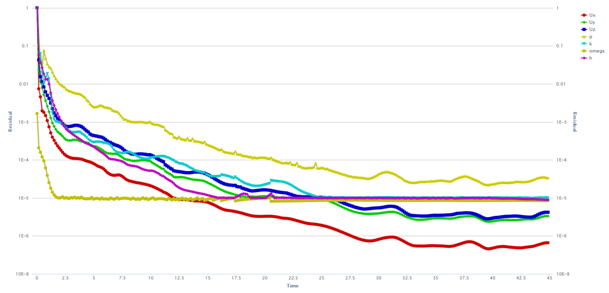

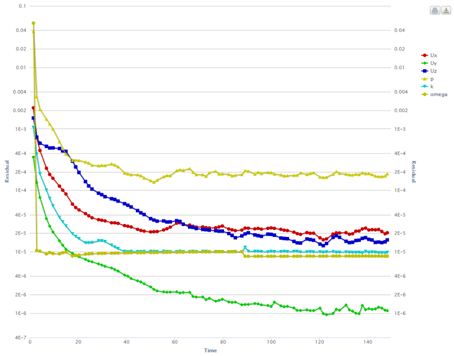

And finally the most important thing, the convergence rate:

ROV SP+G -0.2%

ROV SP -3.8%

ROV WJ50 -4.7%
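The arithmetic behind such a figure can be sketched as follows. This assumes the "convergence rate" is the signed relative difference between simulated and experimental drag, which is my reading of the numbers and not something stated explicitly. The function name and the drag values are hypothetical and only illustrate the calculation.

```python
def signed_deviation_percent(simulated: float, experimental: float) -> float:
    """Signed relative difference in percent.

    A negative value means the simulation underestimates the experiment.
    """
    if experimental == 0:
        raise ValueError("experimental value must be non-zero")
    return (simulated - experimental) / experimental * 100.0


# Hypothetical drag values in newtons, chosen only to show the arithmetic.
experimental_drag = 100.0
simulated_drag = 96.2

deviation = signed_deviation_percent(simulated_drag, experimental_drag)
print(f"{deviation:+.1f}%")
```

Keeping the sign makes the direction of the error visible, which matters here because all three configurations err on the same side.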

In conclusion: I’m really happy with the results. Not because of the values themselves, but because they proved to be repeatable across different settings. Given the residuals, however (which in fact reflect the mesh), I think the outcomes for the ROV with standard propellers and grill will ultimately be a bit smaller (that is, the convergence rate will be slightly worse). But generally speaking (writing), the overall tendency to gently underestimate the vehicle’s drag is proper and should be regarded as positive.

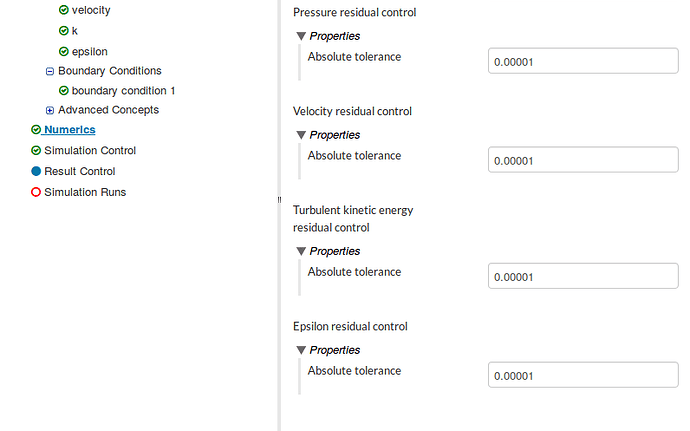

The thing I’d like to work on is better pressure convergence, as I found it quite difficult. On some occasions the solver suddenly lost stability and a case that looked very promising crashed. Of course I know this is strongly related to the mesh, so I hope improving the meshing will automatically improve it.
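One way to spot such a sudden loss of stability in an exported residual history is sketched below. It is a simple heuristic of my own, not something the solver provides: it flags the first iteration where a residual jumps far above the lowest value seen so far, or stops being a finite number. The function name, the factor of 100 and the sample values are all assumptions for illustration.

```python
import math
from typing import List, Optional


def first_divergence(residuals: List[float], factor: float = 100.0) -> Optional[int]:
    """Return the index of the first iteration that looks divergent, or None.

    An iteration counts as divergent if its residual is NaN/inf or exceeds
    `factor` times the smallest residual seen before it.
    """
    running_min = float("inf")
    for i, r in enumerate(residuals):
        if not math.isfinite(r) or r > factor * running_min:
            return i
        running_min = min(running_min, r)
    return None


# Hypothetical pressure residuals: steady decrease, then a sudden blow-up.
pressure = [1e-1, 1e-2, 1e-3, 5e-4, 2e-1, 5.0]
print(first_divergence(pressure))
```

The threshold factor is arbitrary and would need tuning per case; a noisy but healthy residual curve should not trigger it.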

GENERALLY

- The progress bar and runtime counter sometimes freeze, showing zero for the duration of the simulation.

- I don’t know if I wrote it before, but I really like the residuals plots (!). The only thing I’d like to have here is the possibility of saving them as a picture. Best if I could do it at its unusual resolution: a compressed plot sometimes blurs the view and the tendency.

- The solver seems to be really solid (!). I would even say that the default convergence level of 1e-5 is rather strict and 1e-4 is more than enough. I tested it on simpler cases and saw no (real) differences.

- Is there a possibility to set the convergence rate as the simulation target instead of time? I mean as the only target. I get the idea behind ‘time’, but what if the time I set was just too short? Is there a possibility to restart a simulation from the point where it ended?

- What do you think about the strange mesh assignment I described in this topic: Taming relative meshing | snappyHexMesh - #4 by Maciek? Should I worry about it? Or is it just the way the mesher sometimes divides the geometry?

- Looking at the surface mesh I’m really happy with it. Only this BL…
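On the tolerance and stopping-target points in the list above, a convergence-based stopping check can be sketched as follows. This is only an illustration applied to an exported residual history, not the platform's own mechanism. The function name, the field names and the residual values are hypothetical.

```python
from typing import Dict, List, Optional


def first_converged_iteration(
    residuals: Dict[str, List[float]], tolerance: float
) -> Optional[int]:
    """Return the first iteration at which every field is below `tolerance`.

    Returns None if the histories are empty or never reach the tolerance.
    """
    if not residuals:
        return None
    # Only compare iterations that exist for every field.
    n = min(len(series) for series in residuals.values())
    for i in range(n):
        if all(series[i] < tolerance for series in residuals.values()):
            return i
    return None


# Hypothetical residual histories for two fields.
history = {
    "Ux": [1e-1, 1e-3, 5e-5, 8e-6, 2e-6],
    "p": [5e-1, 1e-2, 9e-5, 3e-5, 9e-6],
}

print(first_converged_iteration(history, 1e-4))
print(first_converged_iteration(history, 1e-5))
```

Comparing the two returned iteration counts gives a rough idea of how much extra run time the stricter tolerance costs for a given case.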

As you can see, the simulations look good at this stage. However, if you have any comments or have spotted something you think I missed, feel free to point it out!

I just thought I could get rid of the time factor completely. But I see I should rather set a long time for the simulation and monitor the plots. OK.