Jousef, I have done it both ways and nothing makes sense right now.

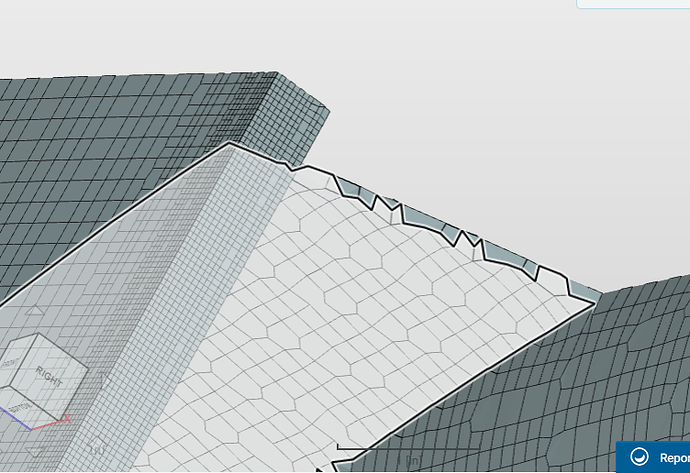

My base mesh, which I think the study will identify as the ‘first steady’ results mesh, has ~11,000,000 cells, and its converged sim has a good yPlus range.

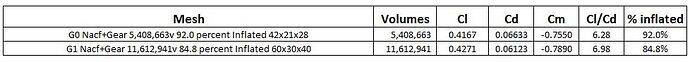

I have made a 5,000,000-cell mesh and a 25,000,000-cell mesh. Only the 5m mesh converged to results; the 25m errored and I am not sure why. My guess was that it was because the weighted % inflated across the 3 meshes dropped from 92% (5m) to 84.8% (11m) and then to 72.2% (25m).

All the study meshes are free of any illegal faces, etc., and have very clean edges.

Since the 25m failed to converge, and I was so sure that I should tweak refinements to get % layered back up for each mesh, I deleted these first 3 meshes and sims (darn, but I did have some notes about them).

I then tweaked the surface refinements to get 3 NEW meshes: 5m at 93.2%, 10m at 96.2% and 22m at 98.4% inflated.
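For anyone following along: one common way to compare meshes with different cell counts is an effective linear refinement ratio, r = (N_fine / N_coarse)^(1/3). This assumes the cells are refined roughly uniformly in x, y and z, which a layered mesh with changing surface refinements may not satisfy. A minimal Python sketch using the approximate cell counts quoted above (the function name is just illustrative):

```python
def effective_refinement_ratio(n_coarse: float, n_fine: float) -> float:
    """Effective linear refinement ratio between two 3D meshes.

    Assumes uniform refinement in x, y and z, so the cell count scales with r**3.
    """
    if n_coarse <= 0 or n_fine <= 0:
        raise ValueError("cell counts must be positive")
    return (n_fine / n_coarse) ** (1.0 / 3.0)


# Approximate cell counts of the re-tweaked meshes from the post.
meshes = {"5m": 5e6, "10m": 10e6, "22m": 22e6}

names = list(meshes)
for coarse, fine in zip(names, names[1:]):
    r = effective_refinement_ratio(meshes[coarse], meshes[fine])
    print(f"{coarse} -> {fine}: r = {r:.3f}")
```

This gives r ≈ 1.26 for 5m → 10m and r ≈ 1.30 for 10m → 22m. Commonly cited grid-convergence guidance suggests keeping r above roughly 1.3, so these steps are on the small side, which can make the mesh-to-mesh differences hard to separate from other error sources such as incomplete iterative convergence.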

5m and 10m ran to convergence, but 22m stopped because my max runtime was not large enough. It was close to convergence, though, with no oscillations at all; Cl, Cd and Cm showed a slight Up, Down, Down trend when the max runtime error halted the run. This is why there are yellow cells in this chart:

So, I am very worried about the study: there is no sign of the values leveling off, and I dread having to make the next mesh and sim for a 50,000,000-cell volume mesh.
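To make "no sign of leveling off" concrete, a simple check is the percent change of each coefficient relative to the previous (coarser) mesh. A minimal sketch; the Cd values below are HYPOTHETICAL placeholders (the real numbers are in the chart), and the 1% threshold is an arbitrary example, not a standard:

```python
def percent_changes(values):
    """Percent change of each value relative to the previous (coarser) mesh."""
    return [100.0 * (curr - prev) / prev for prev, curr in zip(values, values[1:])]


def looks_mesh_independent(values, tol_percent=1.0):
    """True if the last mesh-to-mesh change is within tol_percent.

    tol_percent is a user-chosen threshold; 1% is only an example.
    """
    changes = percent_changes(values)
    return bool(changes) and abs(changes[-1]) <= tol_percent


# HYPOTHETICAL Cd values for coarse -> medium -> fine meshes (not the post's data).
cd = [0.310, 0.302, 0.300]
print([round(c, 2) for c in percent_changes(cd)])
print(looks_mesh_independent(cd, tol_percent=1.0))
```

A run of shrinking percentage changes suggests the solution is settling; changes that do not shrink from one mesh to the next suggest it is not (yet).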

Right now I have come to believe that a mesh which is the subject of any Mesh Independence Study SHOULD NOT be layered (and for sure not with an absolute total layer thickness).
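One way to see the layering concern: for a geometric inflation stack of n layers with first-layer height h1 and growth ratio g, the total thickness is T = h1 (g^n - 1) / (g - 1). If T, n and g are all held fixed, h1 and every layer above it are fixed too, no matter how much the background mesh is refined. A minimal sketch, assuming a simple geometric growth law (the layering algorithm in the actual mesher may differ) and HYPOTHETICAL layer settings:

```python
def first_layer_height(total_thickness: float, n_layers: int, growth: float) -> float:
    """First-layer height of a geometric layer stack with a fixed total thickness.

    Assumes each layer is `growth` times thicker than the one below it, so
    total = h1 * (growth**n - 1) / (growth - 1), or total = h1 * n when growth == 1.
    """
    if n_layers < 1 or total_thickness <= 0 or growth <= 0:
        raise ValueError("invalid layer parameters")
    if growth == 1.0:
        return total_thickness / n_layers
    return total_thickness * (growth - 1.0) / (growth ** n_layers - 1.0)


def layer_heights(total_thickness: float, n_layers: int, growth: float):
    """Heights of every layer in the stack, from the wall outwards."""
    h1 = first_layer_height(total_thickness, n_layers, growth)
    return [h1 * growth ** i for i in range(n_layers)]


# HYPOTHETICAL settings: 5 mm total thickness, 3 layers, growth ratio 1.5.
# If these stay the same for every mesh in the study, the near-wall cells are
# identical whether the background mesh has 5m or 22m cells.
heights = layer_heights(0.005, 3, 1.5)
print([round(h * 1000, 4) for h in heights], round(sum(heights) * 1000, 6))
```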

The reason I say that is that, ideally, each successive mesh in the study should be the previous mesh with each of its cells' x, y, z dimensions split exactly in half. That way there would be no question that each mesh is a finer version of the previous one. If a mesh is layered (especially with a constant total layer thickness), the resulting mesh will NOT be a finer representation of the previous one, and you should not expect to have results that level off as the meshes get finer. (I hope that someone else understands what I just said.)
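Strictly splitting every cell in half in x, y and z gives nested meshes with a refinement ratio of exactly 2, but each split turns one hexahedral cell into 2 × 2 × 2 = 8 cells, so the cost climbs quickly. A small sketch starting from the ~11,000,000-cell base mesh (the function name is illustrative):

```python
def halved_cell_counts(base_cells: int, levels: int):
    """Cell counts when every cell is split in half in x, y and z, `levels` times.

    Each split turns one hexahedral cell into 2 * 2 * 2 = 8 children, so the
    linear refinement ratio between successive meshes is exactly r = 2.
    """
    return [base_cells * 8 ** k for k in range(levels + 1)]


# Base mesh of ~11,000,000 cells from the post, refined twice.
for k, n in enumerate(halved_cell_counts(11_000_000, 2)):
    print(f"level {k}: {n:,} cells")
```

Starting from 11m cells this gives 88m and then 704m cells. This is one reason studies often use a smaller ratio such as r ≈ 1.3 (about 1.3^3 ≈ 2.2 times the cells per step); the trade-off is that a non-integer ratio cannot be produced by simply splitting cells, so the meshes are no longer exactly nested.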

So, before I do a 50m volume mesh and sim (Ugh!!!), I think I should take the layering off the base mesh and create the other meshes with no changes in refinement levels, just with double and half the number of background mesh cells in x, y and z. Then I should plot the results and re-evaluate the situation…
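When plotting and re-evaluating the results, one widely used structured approach (swapped in here, not something from this thread) is Richardson extrapolation with Roache's Grid Convergence Index (GCI). For three meshes with a constant refinement ratio r and monotonic convergence, the observed order is p = ln((f3 - f2) / (f2 - f1)) / ln(r), where f1 is the finest-mesh result and f3 the coarsest. A minimal sketch under those assumptions; the safety factor of 1.25 is the value usually quoted for three-mesh studies, and the test values are synthetic, not from this study:

```python
import math


def grid_convergence_index(f_coarse, f_medium, f_fine, r, safety_factor=1.25):
    """Observed order p, Richardson-extrapolated value and fine-mesh GCI in percent.

    Assumes three systematically refined meshes with the same refinement ratio r
    between each pair, and monotonic convergence (successive changes share a sign).
    """
    if r <= 1.0:
        raise ValueError("refinement ratio must be greater than 1")
    d_coarse = f_coarse - f_medium  # change going from coarse to medium
    d_fine = f_medium - f_fine      # change going from medium to fine
    if d_fine == 0.0 or d_coarse / d_fine <= 0.0:
        raise ValueError("not monotonically converging; this GCI form does not apply")
    p = math.log(d_coarse / d_fine) / math.log(r)
    if p <= 0.0:
        raise ValueError("differences are not shrinking; no observed convergence")
    f_extrapolated = f_fine + (f_fine - f_medium) / (r ** p - 1.0)
    rel_change = abs((f_fine - f_medium) / f_fine)
    gci_fine = 100.0 * safety_factor * rel_change / (r ** p - 1.0)
    return p, f_extrapolated, gci_fine


# SYNTHETIC check: f(h) = 1 + 0.1 * h**2 on h = 1, 0.5, 0.25 (so r = 2, p = 2).
p, f_ext, gci = grid_convergence_index(1.1, 1.025, 1.00625, r=2.0)
print(f"p = {p:.3f}, extrapolated = {f_ext:.5f}, GCI_fine = {gci:.3f}%")
```

With the synthetic data this recovers p = 2 and an extrapolated value of 1.0. If the differences between meshes change sign (oscillatory convergence), the function refuses rather than report a misleading number. The 5m/10m/22m meshes above have slightly different ratios (about 1.26 and 1.30); the published procedure handles that case by solving for p iteratively, which this sketch does not do.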

What do you think?? (ugh, another monster post after an all-nighter)

Dale

AND I am just running out of core hours, so I guess there will be a slight delay in this topic…

If it helps, here are the results of the two of the first three (deleted) meshes that did converge (these were NOT tweaked to maintain % inflated):

@1318980 and @Get_Barried HELP

EDIT: Since doing this study, I have come to realize that I should not evaluate Cm as a percentage change from the previous mesh iteration for MIS purposes. The reason is that we can re-map Cm to any value, including 0, simply by moving the Reference Center of Rotation point that determines the Cm moment arm for any simulation run. So, please ignore the Cm plots…
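The Reference Center of Rotation point really does only shift the moment: if M_A is the moment about point A and F is the total force, the moment about another point B is M_B = M_A + (r_A - r_B) × F. A minimal sketch with HYPOTHETICAL force and moment values, showing a reference point where the pitching moment becomes zero:

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product a x b of two 3D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def transfer_moment(moment_a: Vec3, force: Vec3, point_a: Vec3, point_b: Vec3) -> Vec3:
    """Moment about point_b, given the moment about point_a and the total force.

    M_B = M_A + (r_A - r_B) x F, valid for any distribution of forces on the body.
    """
    arm = tuple(pa - pb for pa, pb in zip(point_a, point_b))
    shift = cross(arm, force)
    return tuple(m + s for m, s in zip(moment_a, shift))


# HYPOTHETICAL values: 100 N of lift along +z and a 50 N*m pitching moment
# about y, both referred to the origin.
force = (0.0, 0.0, 100.0)
moment_origin = (0.0, 50.0, 0.0)
origin = (0.0, 0.0, 0.0)

# Moving the reference point to x = -0.5 m puts it on the line of action of
# the force, so the pitching moment about y becomes zero.
print(transfer_moment(moment_origin, force, origin, (-0.5, 0.0, 0.0)))
```

Cm is this moment divided by q∞ · S_ref · L_ref, so it shifts in exactly the same way. It also means a percent-change criterion on Cm can blow up if the chosen reference point happens to put Cm close to zero, since tiny absolute changes then look like huge percentages.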

Let me have a look at it and see what approach might be the best here.


I have these questions now:

…and make sure that whoever I am talking to about this magic coincidence of apparently precise, valid CFD results also understood that the match was a lucky coincidence, and that CFD is generally only capable of predicting results within a +/- 5 percent range, AND only if you really know what you are doing in your CFD setup. Is that a good generalization?

This style leads to much re-reading but does have some logic in it. If my sentences were trees and my brackets were branches, then I guess I am a spruce tree; perhaps I should try to be a redwood.