I haven’t looked into it in detail, but it seems that you fix a lower temperature on all “surrounding” boundaries and a high temperature at the inner body, right? So, to put it casually, you only allow air to leave at the lower temperature while you “heat it up” inside, which could be why the simulation blows up. @Ali_Arafat, what do you think?

Sorry, I just saw that I never addressed your question. I have 8 GB of RAM in my local laptop and a standard graphics chip. 14 million cells is doable with that, but it’s not a lot of fun, depending on how much you need to interact with the results (clipping, slicing, contour plots, etc.). More RAM and a powerful graphics card would obviously be better.

Regarding the online post-processor: It’s among the top 3 features we are working on right now. We will gradually ship updates very soon that will improve both performance and functionality. Once a release date is confirmed, I’ll let you know. In general, though, whatever you can cover with result control items, you should, as that is much faster, even with the online post-processor.

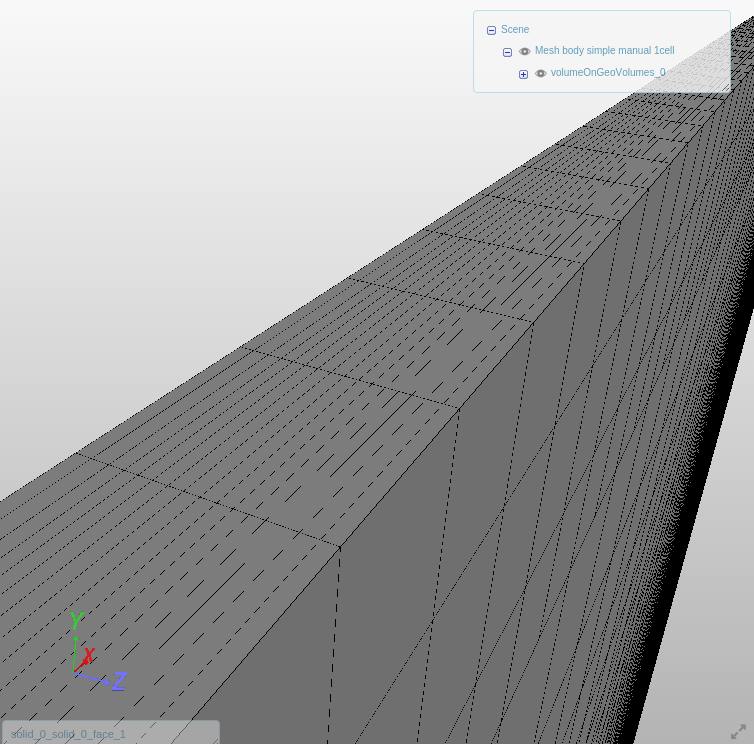

I found some time to dig deeper into that project. Please be aware that your 2D approach still works with multiple volume cells across the thickness. The hex-dominant mesher can only create 3D meshes, as the image above shows.

I checked the setup again and to me it looks very good, except, as mentioned, for the temperature BCs that are “overconstrained”. I changed that now and started a run. I’ll let you know how it goes.

One thing that struck me: The relaxation factors seem to be very defensive/low. Is that something you have had good experiences with, or is it rather because you had divergence problems?
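To illustrate the trade-off I mean, here is a minimal toy sketch in Python. It is not the solver used in this project: the function `relaxed_fixed_point` and the test map `g` are made up for illustration, and they only mimic how an under-relaxation factor `alpha` damps the update between iterations.

```python
import math


def relaxed_fixed_point(g, x0, alpha, tol=1e-8, max_iter=10_000):
    """Solve x = g(x) with the under-relaxed update x <- x + alpha * (g(x) - x).

    Hypothetical helper for illustration only.
    Returns (x, iterations, converged).
    """
    x = x0
    for i in range(1, max_iter + 1):
        x_new = x + alpha * (g(x) - x)
        if not math.isfinite(x_new):
            # The iterate overflowed: the iteration has diverged.
            return x_new, i, False
        if abs(x_new - x) < tol:
            return x_new, i, True
        x = x_new
    return x, max_iter, False


def g(x):
    # Toy map with fixed point x = 1. The plain iteration (alpha = 1)
    # doubles the error every step, so it diverges.
    return -2.0 * x + 3.0


if __name__ == "__main__":
    for alpha in (1.0, 0.3, 0.05):
        x, iterations, converged = relaxed_fixed_point(g, x0=0.0, alpha=alpha)
        print(f"alpha={alpha}: converged={converged}, iterations={iterations}, x={x}")
```

In this toy problem, no relaxation diverges, a moderate factor converges quickly, and a very low factor still converges but needs many more iterations. That is the reason for my question: very low factors are safe, but they can cost a lot of run time if divergence was never actually a problem.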

I’ll keep you posted!

David