Can I do anything about this simulation log error message?

CR initialization phase

User exception raised but not intercepted.

The bases are closed.

Non-existent object in open databases: SIM. ORDR!

the object was not created or it was destroyed!

This message is a developer error message.

Contact technical support.

Hi @Magain!

Please share the project link with us so that we can have a quick look at this. We will help you out!

Best,

Jousef

Hi @Magain,

the error you posted is actually just a secondary effect of the initial error, which we can find a little earlier in the solver log:

!---------------------------------------------------------------------------------------!

! <JEVEUX_60> !

! !

! Erreur lors de l’allocation dynamique. Il n’a pas été possible d’allouer !

! une zone mémoire de longueur 75302145 (octets). !

! La dernière opération de libération mémoire a permis de récupérer 16928624 (octets). !

!---------------------------------------------------------------------------------------!

(In English: Error during dynamic allocation. It was not possible to allocate a memory area of length 75302145 bytes. The last memory release operation recovered 16928624 bytes.)

This tells you that there is insufficient memory available on the cloud instance to run the job.
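
To make the numbers in that message easier to read, here is a minimal Python sketch that pulls the two byte counts out of the log text and converts them to MiB. The function names are hypothetical, and the sketch assumes the first `(octets)` figure is the requested size and the second is the amount recovered, as in the message quoted above.

```python
import re

# Matches every integer that is followed by "(octets)" in the log text.
BYTE_COUNT = re.compile(r"(\d+)\s+\(octets\)")


def parse_jeveux_60(log_text):
    """Return (requested_bytes, recovered_bytes), or None if not found.

    Assumption: the first figure is the failed allocation request and the
    second is the memory recovered by the last release operation.
    """
    counts = BYTE_COUNT.findall(log_text)
    if len(counts) < 2:
        return None
    return int(counts[0]), int(counts[1])


def to_mib(n_bytes):
    """Convert a byte count to mebibytes."""
    return n_bytes / (1024 * 1024)


sample = (
    "! une zone mémoire de longueur 75302145 (octets). !\n"
    "! La dernière opération de libération mémoire a permis de "
    "récupérer 16928624 (octets). !\n"
)

requested, recovered = parse_jeveux_60(sample)
print(f"requested: {to_mib(requested):.1f} MiB")
print(f"recovered: {to_mib(recovered):.1f} MiB")
```

The request is several times larger than what the last memory release recovered, which matches the diagnosis of insufficient memory.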

Increasing the number of cores to 4 or 8 should work.

Is there any reason why you reduced it to 2 in the first place and you chose the Multfront solver?

The simulation should work fine with the default settings (MUMPS solver + 4 cores) and also be much faster than with Multfront (see my copy of your project here).

Also, this error should have been properly reported, but it seems there was an issue detecting the error code. We should have this fixed by tomorrow, so that you get the actual error reason reported in the event log, even in English.

As a side note:

- The relatively high memory requirement is caused by the deformable remote force. The deformable remote force and displacement boundary conditions have a relatively large memory footprint, which scales with the number of nodes of the assigned entities, because additional linear equations are added to the system for each connected node.

- For a deformable remote displacement / force BC it is generally advised not to use the Multfront solver, but rather the default MUMPS or, in case an iterative solver is needed, PETSC. We will add a warning message so that this is clear to the user on run creation.

Best,

Richard

On an earlier attempt I saw a message (which appears to have been transient as I could not find it again), that said something about using a different solver and changing the number of cores.

As I was already using MUMPS, and 4 cores is my upper bound, I tried a different solver and fewer cores in order to try and move forward.

In the absence of understanding, documentation and feedback, all I could do was try changing something.

I finally managed to reproduce the message that led me to try a different solver and reduce the number of cores:

The following simulation run(s) failed:

- Run 2

- An error appeared while allocating memory. If you are using the Multfront, LDLDT or GCPC solver, please choose a larger machine by increasing the number of computing cores. For the MUMPS and PETSC solvers you should additionally reduce the number of cores used for the computation to allow a higher RAM per CPU ratio.

Please check the ‘Event Log’ and ‘Solver log’ for more details.

In case of questions, feel free to contact us at support@simscale.com or ask in the Forum.

Please see project 45678/Static13/Run 2 for the settings that (re)produced this error message:

(https://www.simscale.com/workbench/?pid=5944892477764956419&mi=run%3A6%2Csimulation%3A13&mt=SIMULATION_RUN)

I’d really appreciate your input on this.

Buk

(Update: Also, could you look into why Run 3 of the same project didn’t produce any solver log at all?)

Hi @Magain,

OK, I see that the message is far from ideal. We will change it.

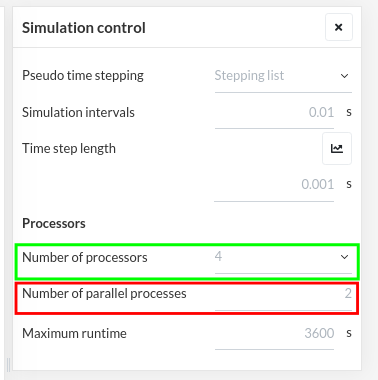

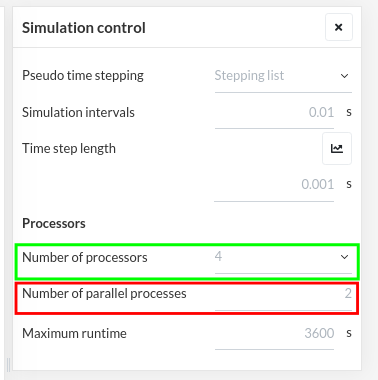

What it actually means is that you should increase the “number of processors” and potentially also reduce the “number of parallel processors” as seen here:

The first value (“number of processors”) defines which cloud instance your solution will be computed on, and so implicitly also the total amount of RAM available. This RAM is then distributed evenly across all parallel processes, so each process gets 1/n of the total RAM if you use n parallel processes.
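
As a rough illustration of that even split, here is a small Python sketch. The function name is hypothetical and the 16 GB total is an example figure only, not the size of any actual cloud instance.

```python
def ram_per_process(total_ram_gb, n_parallel):
    """RAM available to each parallel process under an even split."""
    if n_parallel < 1:
        raise ValueError("n_parallel must be at least 1")
    return total_ram_gb / n_parallel


# Hypothetical instance with 16 GB of RAM in total (illustrative value only).
TOTAL_RAM_GB = 16.0

for n in (1, 2, 4):
    share = ram_per_process(TOTAL_RAM_GB, n)
    print(f"{n} parallel process(es): {share:.1f} GB each")
```

With the total fixed, every doubling of the parallel process count halves the memory each process can use.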

Using more procs in parallel increases the simulation speed, but it also reduces the RAM available per parallel proc. Since performance never scales linearly with the number of procs, there is a trade-off to make.

We are currently working on automating this, so in the future you should not need to worry about it any more.

For now I would say whenever you see this message, double the “number of procs” and leave the “number of parallel procs” as they were - so from 4 - 2 you switch to 8 - 2.
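
The rule of thumb above can be written down as a tiny Python sketch. The function and parameter names are hypothetical and do not correspond to any SimScale API; the point is only that the first value doubles while the second stays fixed.

```python
def next_settings(n_procs, n_parallel):
    """Apply the rule of thumb after a memory allocation error.

    Doubles the "number of processors" (a larger instance, so more total
    RAM) and leaves the "number of parallel processors" unchanged.
    """
    return n_procs * 2, n_parallel


def ram_share_ratio(n_procs, n_parallel):
    """Processors per parallel process, as a rough proxy for RAM per process.

    Assumption: total RAM grows roughly in proportion to the number of
    processors of the chosen instance. This is not stated in the thread.
    """
    return n_procs / n_parallel


before = (4, 2)
after = next_settings(*before)
print(before, "->", after)
print("relative RAM per process:",
      ram_share_ratio(*before), "->", ram_share_ratio(*after))
```

Under that proportionality assumption, going from 4 - 2 to 8 - 2 doubles the memory available to each parallel process.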

I hope this helps.

Regarding Run 3, I will need to check in more depth; it seems to be a specific issue with the 1-core instance.

Best,

Richard
